Problems with AB testing

App Store Optimization

👨🏻💻 UX Designer, self-employed at NEXT PLANET - small design studio. 👨🏻💻 Indie iOS developer 📱 Creator of: Moons, Numi, Skoro, Wins and Emo. 🎸Guitarist at ZERO and Quadroom 🎨 MA in art 🌱 Vegan

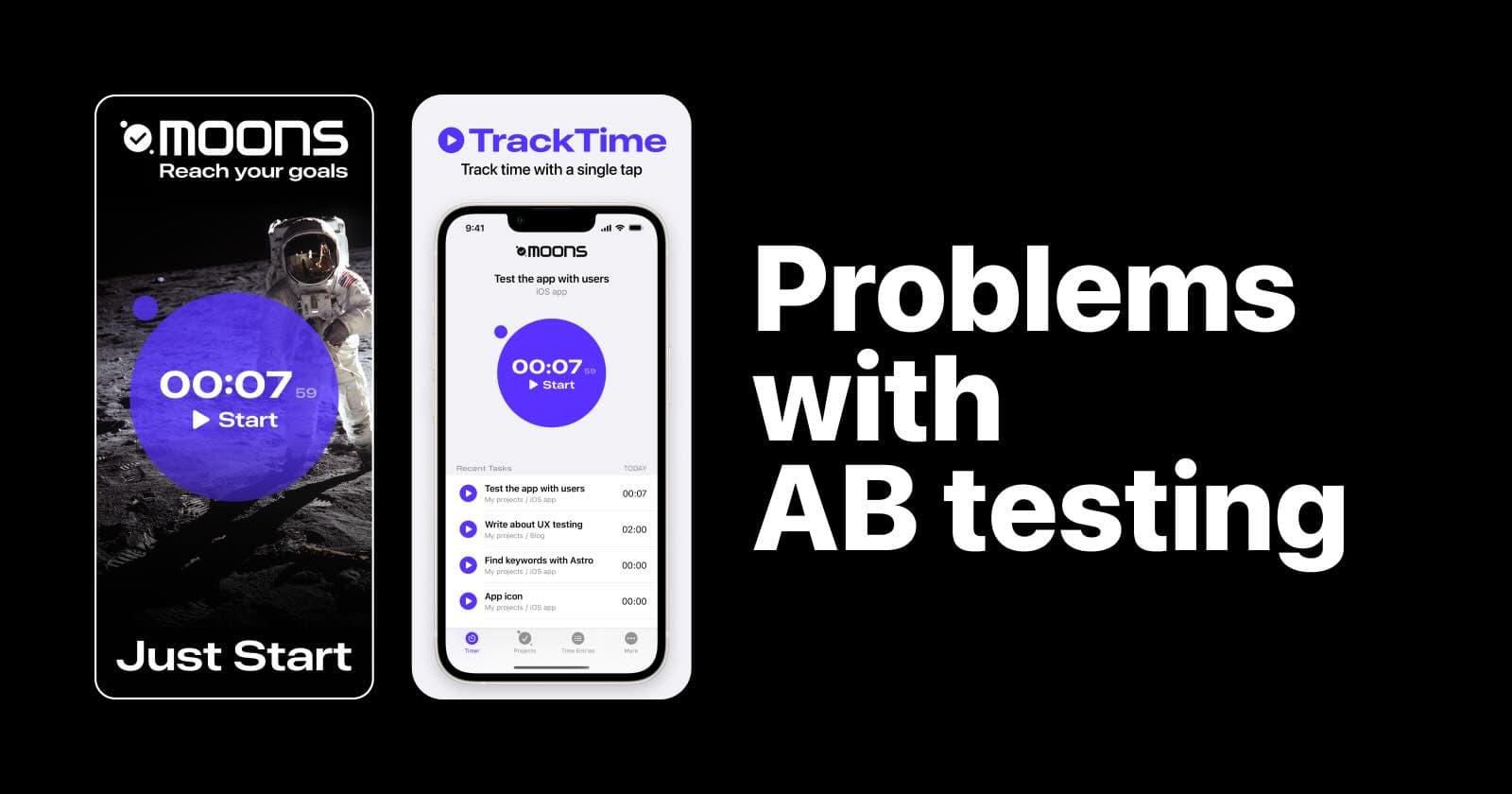

Two months ago, I decided to run an AB test for one of my iOS apps, a time tracker named Moons. It's a free-to-download app with basic functionality available free of charge and some pro features behind a paywall. I wanted to check how changing my app screenshots would affect my app's Conversion Rate.

To clarify, here is how Apple defines this metric in App Store Connect, where we can run such tests:

"The estimated percentage of users who download or pre-order the app after seeing this variant of the product page."

This basically means that I want to improve Moons' Product Page so that more people download the app. Whether these people decide to pay for the pro features later is a different story. Here, I simply want to increase the number of downloads to have more potential customers.

Hypothesis

My idea is simple. I will add a cool new image to my product page to make my app stand out from the crowd. This image will resonate with users on an emotional level, inspiring them to achieve their goals and boost their productivity. This will result in increased downloads and, ideally, more paying customers.

Simple and brilliant. The question is not IF but HOW EFFECTIVE it will be.

Creative Screenshots

Apple calls them Screenshots in App Store Connect. But in the context of App Store Optimization, they are often called Creatives. Basically, they are the images people see when they are searching for apps on the App Store.

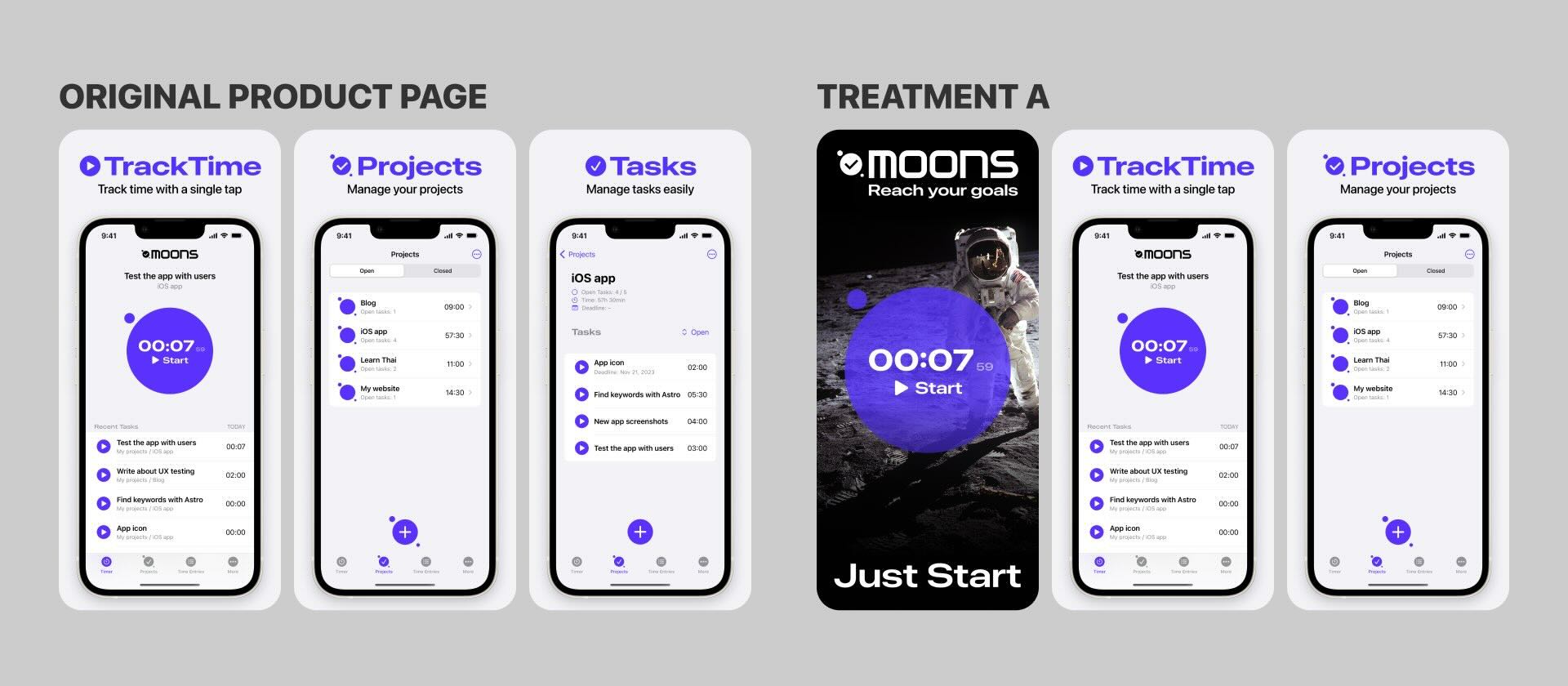

I decided to get creative with my Creatives 🙃 And here's the result: an astronaut on the moon, a few catchy marketing slogans and a glimpse of my app's UI in the foreground. Pretty cool, isn't it?

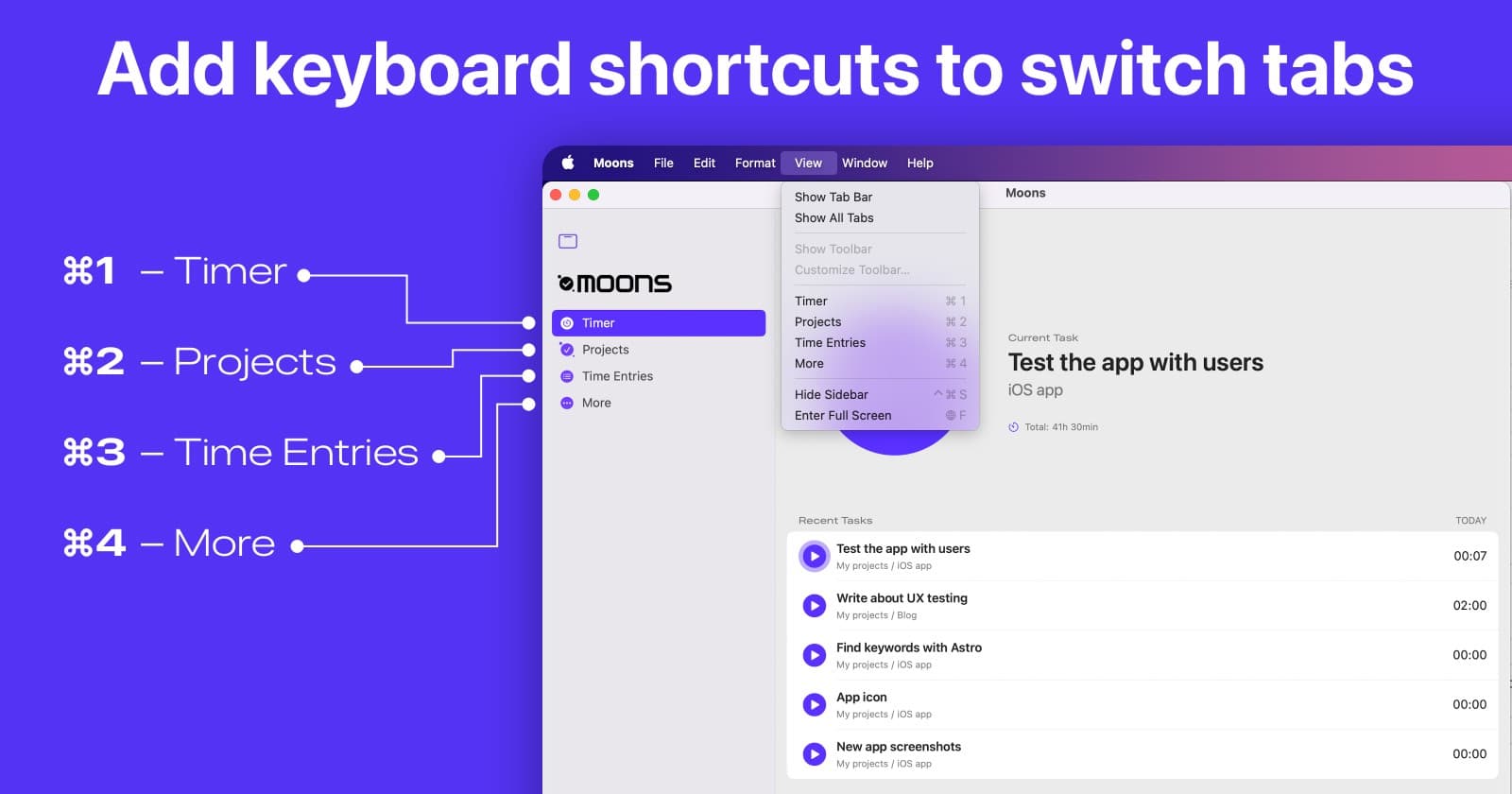

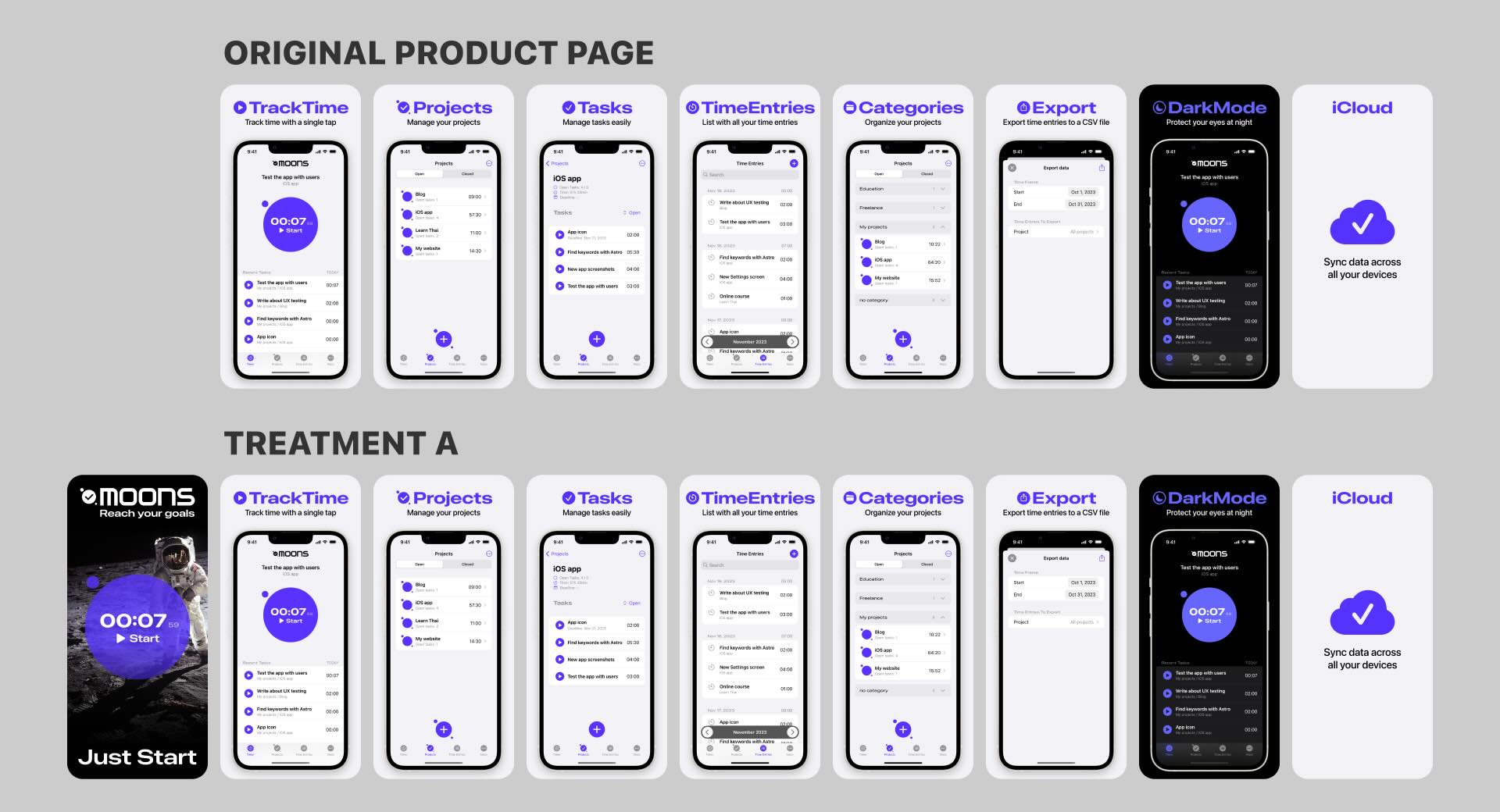

I wanted to include this particular image in my creatives. Here's a comparison between my Original Product Page and Treatment A (this is Apple's term for sets of images used in AB tests, which you'll see in the results later in this article).

Why does adding or changing just one image matter? Because users typically see the first three creatives for each app in the search results. Therefore, to improve the initial step of my sales funnel - the search results - I decided to test these two variants.

AB testing on family and friends

I showed this new creative to some friends and people in my family. I heard things like:

"Smart marketing!"

"Great idea! It tells people that with your app they can reach their goals. And anything is possible, even landing on a moon."

At this point I am a creative genius! And people believe in my success. Awesome!

AB testing on Social Media

Right before writing this article, I posted my creative on social media and asked people after the fact:

"Can you guess by how much adding this one image to my Product Page changed my Conversion Rate?"

The iOS dev community is really great! Full of positive and supportive people. I got many answers with numbers between +7% and +500%. Here are 2 of the comments I really liked:

"(...) I think the impressions/downloads rate must have gone up dramatically.

Perhaps even a few hundred percent.

Because seeing a plain more or less standard iOS screenshot doesn't stop people in their App Store scrolling tracks."

(Wow! That's so aligned with my hypothesis.)

"I’d say it increased a lot and mostly by people that want to go to the moon.🌙😂"

(Positive and funny. I love it!)

AB testing on the App Store

Now let's find out how the App Store test went. My AB test was active for 64 days.

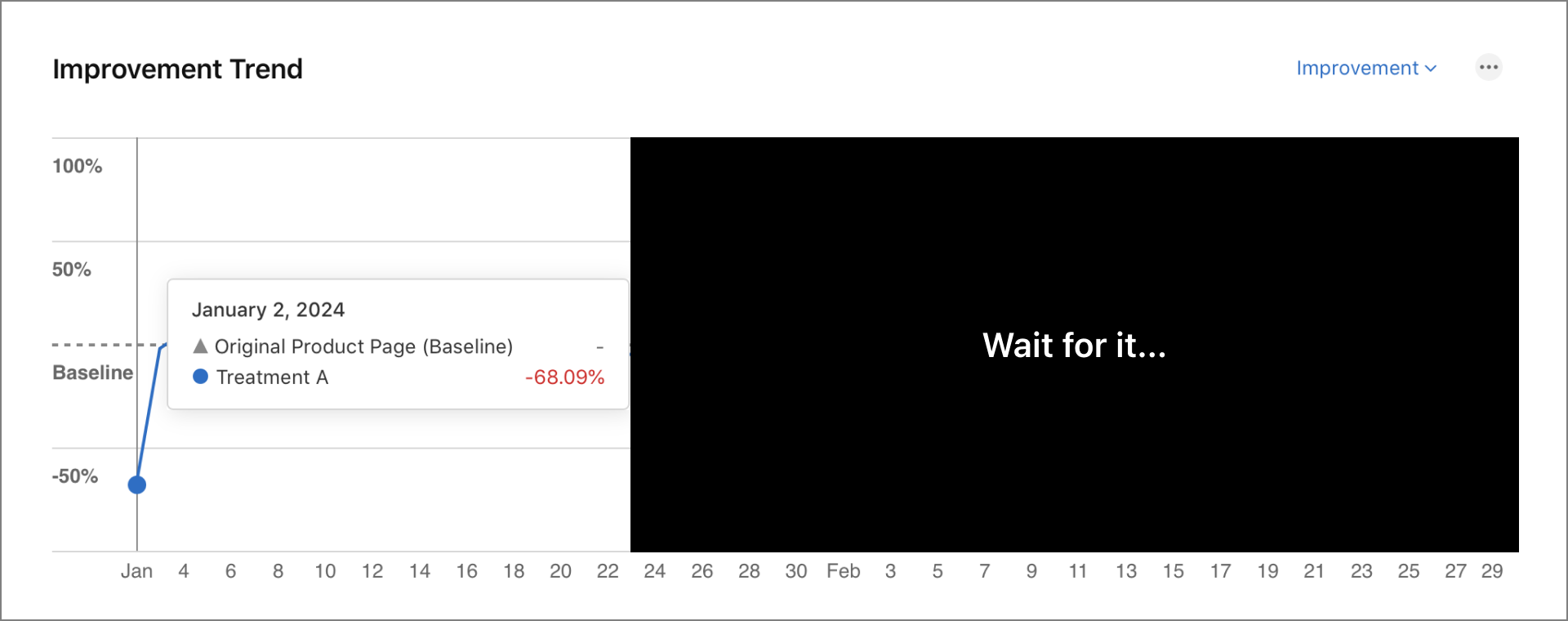

Day 1

That's strange 🤔 Conversion Rate lower by 68.09%? But no worries. It's just one day. Not enough data. It will look much better soon ☺️

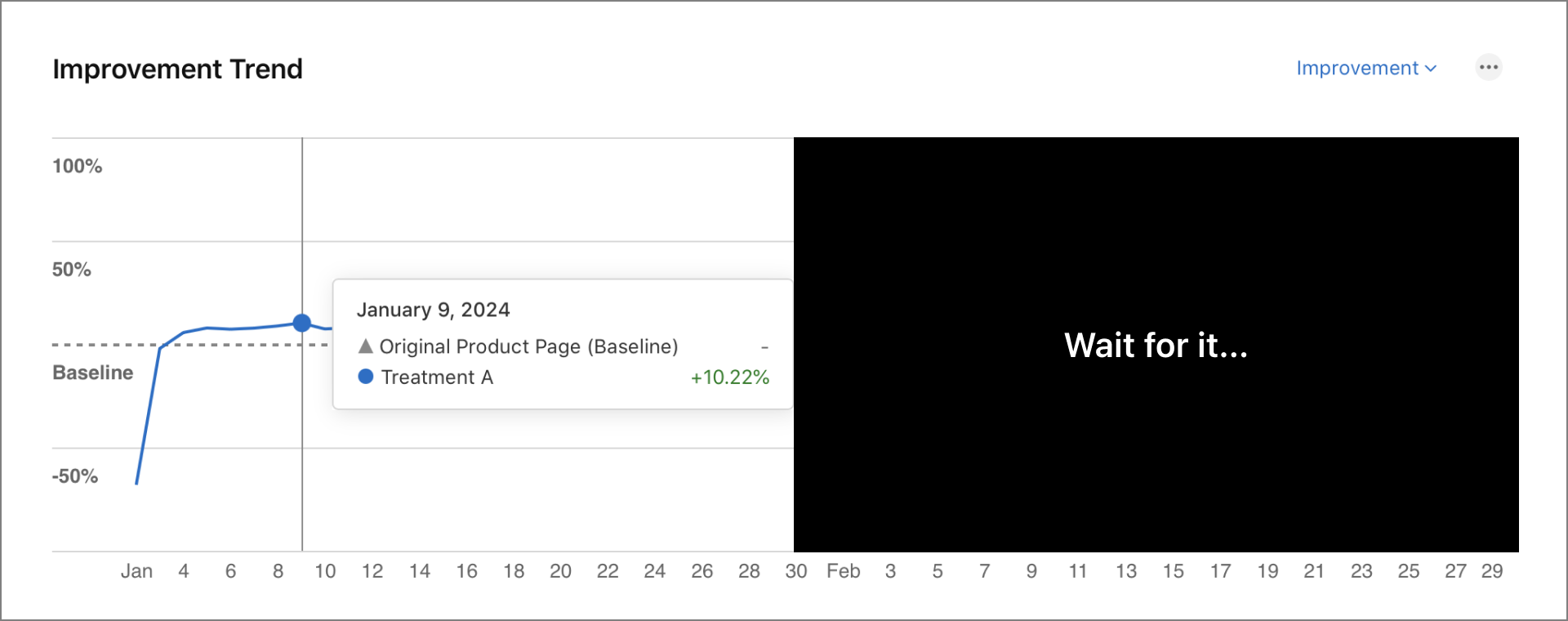

Day 7

See? 😀 I was right! After one week we have a nice improvement! +10.22% to my Conversion Rate and +10 to my business skills.

One day earlier I posted my first results on social media:

"🥸 I don’t want to brag, but… I managed to improve conversion rate of my iOS app Moons by 8.78% with a… 0.1% confidence 😅"

I got a lot of support from my wonderful indie dev community. Likes, virtual high-fives. And I am sure that CEOs of big app companies were getting anxious at this point. That's one small step for an indie developer, one giant leap for the whole indie community!

But I kept testing... Because everyone ignored one part of my post:

"(...) with a… 0.1% confidence 😅"

Which basically means that I didn't have enough data yet.

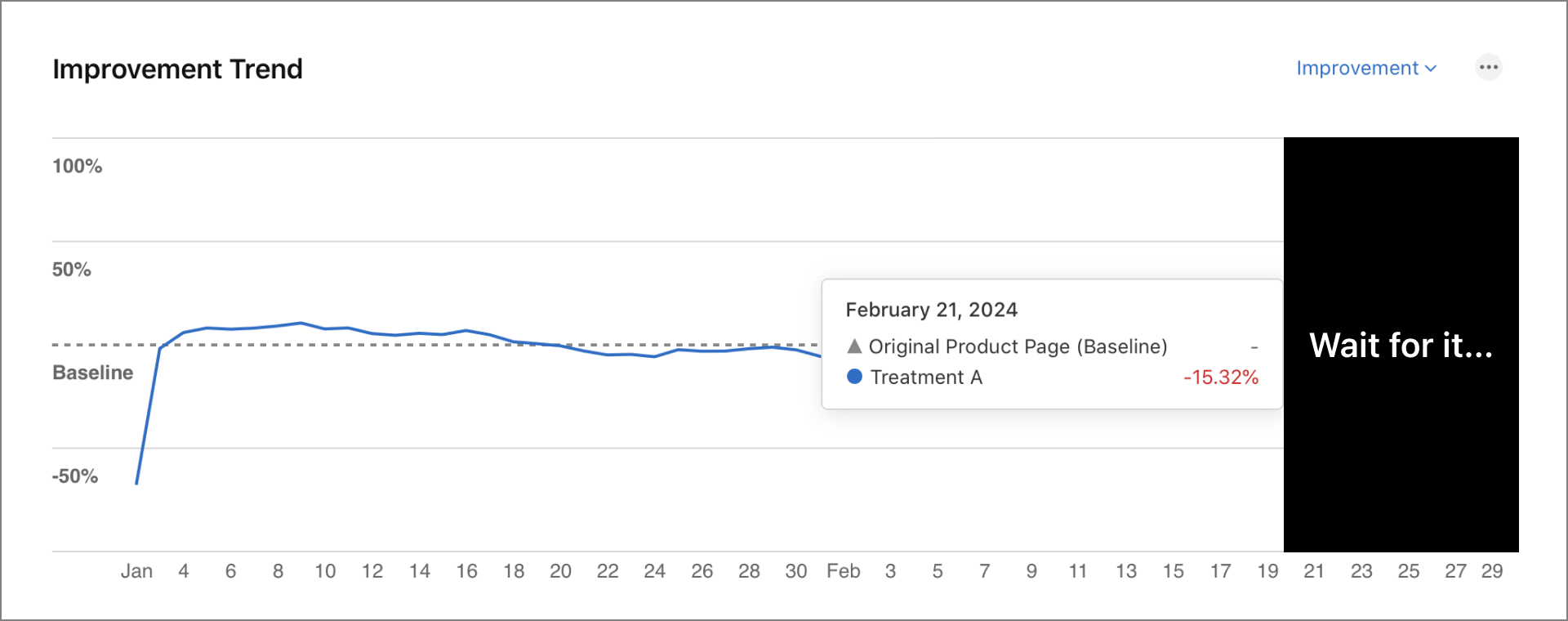

Day 51

"😱Noooooo! My numbers are going down!"

At some point my Conversion Rate went down by 15.32%. Breathe, Kris! Don't panic. Stay calm and keep going.

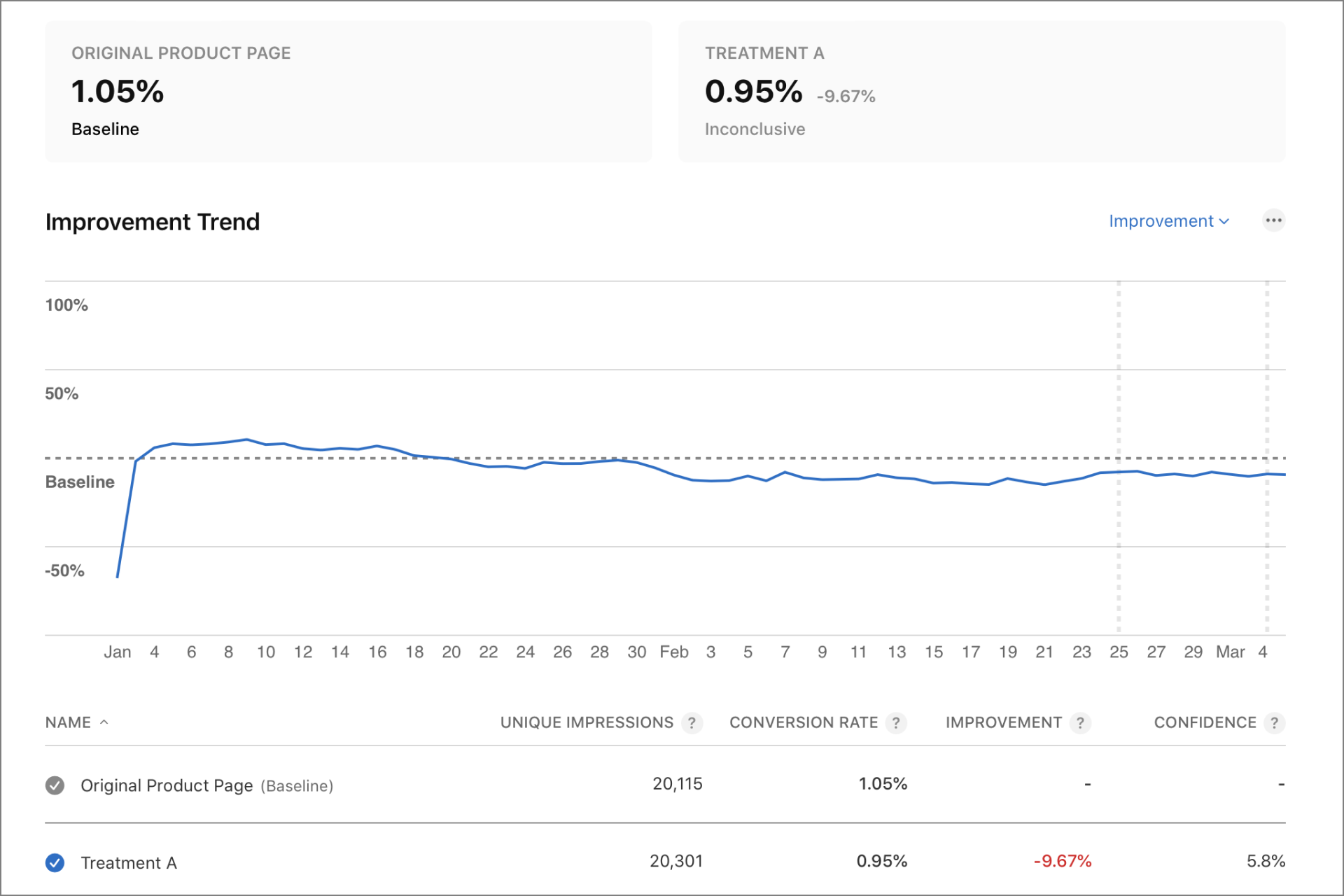

Day 64

Now that's not funny anymore! 🥲 After the initial spike there were small changes, but overall my brilliant and creative Creative (AKA Treatment A) performs worse than the Original Product Page.

I decided to stop the test at this point, especially since I had released a new version of the app with a new localization and new screenshots, which would only skew my results.

Please note, however, that these results are marked as Inconclusive.

Apple says:

"There is not enough data to determine whether this variant performs differently from the baseline."

And I will come back to this later. But the main question is... What went wrong?!

Remember this one comment?

"I’d say it increased a lot and mostly by people that want to go to the moon.🌙😂"

This person predicted that I would have positive results, but maybe the most interesting part is this one:

"(...) mostly by people that want to go to the moon."

Maybe my creative image would work great for an Earth to Moon transportation company but not for a time tracking app? But that's not the only problem.

Problems with AB testing

Here are some key takeaways:

AB testing on friends and social media will not give you accurate results

Definitely do it! It's motivating. You will hear great ideas. Maybe you will learn from someone who already did some tests before. But you simply won't have enough data. And also your family and friends are very biased. They care about you and wish you well, so their decisions are often influenced by emotions rather than financial considerations.

Confidence matters

Don't ignore this metric. My Conversion Rate increased by 10% after one week, but then decreased by 10% after two months. And yes, I am aware that my results are inconclusive. With a confidence level of only 5.8%, the difference I measured could easily be random noise, so the final result might look very different with more data.

You need a lot of data

And that's the main reason why I stopped the test. I can't wait another 6 months for conclusive results. I need to get more impressions first (for example, using Apple's Search Ads) and I may run the same test again in the future.

Not every change is a big change

I want to run some other tests and check if making other changes will be more impactful. The bigger the change, the less data I would potentially need.

Marketing images instead of app screenshots may or may not work

I need to experiment more with this idea. The whole concept may be wrong. Or maybe just the photo should be different. Or the text/message should be different. We won't know if we don't try.

Your results may be completely different

Using the same creatives, the same images and marketing slogans you could see completely different results. Different app. Different audience. Different keywords. There are so many factors. The only way to know what works for your app is to test it.

Your gut and your data are two different things

Many people say that you should trust your gut. And I agree. You should definitely hire James Gut at your company. He's such a creative guy! But if you understand his strengths and weaknesses, you will put him in the Hypothesis department along with your family and friends. You want Stephanie Data in your Business Decisions department.
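To give Stephanie Data something to work with, here is a minimal Python sketch of two textbook calculations behind the "Confidence matters" and "You need a lot of data" takeaways: a two-proportion z-test, and a rough estimate of how many impressions each variant needs. These are standard frequentist approximations and not necessarily how App Store Connect computes its confidence figure. The function names, the 10% significance level, the 80% power and every number in the example are assumptions made up for illustration, not data from my test.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(downloads_a, impressions_a, downloads_b, impressions_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (relative_change, p_value), where relative_change is the
    change of variant B relative to variant A (0.10 means +10%).
    """
    rate_a = downloads_a / impressions_a
    rate_b = downloads_b / impressions_b
    # Pooled rate under the assumption that both variants convert equally.
    pooled = (downloads_a + downloads_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return (rate_b - rate_a) / rate_a, p_value


def impressions_needed(baseline_rate, relative_lift, alpha=0.10, power=0.80):
    """Approximate impressions per variant needed to detect a relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


if __name__ == "__main__":
    # Hypothetical numbers: original page vs. Treatment A.
    change, p_value = two_proportion_z_test(120, 4000, 108, 4000)
    print(f"relative change: {change:+.1%}, p-value: {p_value:.2f}")

    # Hypothetical 5% baseline: a small lift needs far more data than a big one.
    for lift in (0.10, 0.50):
        needed = impressions_needed(0.05, lift)
        print(f"lift {lift:+.0%}: about {needed} impressions per variant")
```

The second function makes the "bigger change, less data" point concrete: the required impressions grow roughly with the inverse square of the lift you want to detect, so a small improvement needs many times more traffic than a large one.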

Thank you for reading!

I hope you found this article useful or at least entertaining. Please follow me for more tips on how to decrease your Conversion Rate 😅

And if you want to support my work, please like, comment, share the article and most importantly...

📱Check out my apps on the App Store:

https://apps.apple.com/developer/next-planet/id1495155532

☕ If you like what I do, consider supporting me on Ko-fi! Every little bit means the world!

https://ko-fi.com/kslazinski